Hyper-V Failover Cluster: Affordable HA for the SMB

Next to Office 365, virtualization is still the number one recommendation I make to small businesses. It’s a no-brainer. Enabling Hyper-V opens up so many great opportunities–grow your server’s CPU, RAM or storage on the fly, perform host-level backups, Live Migrate to new hardware with no downtime, use Hyper-V Replica or Azure Site Recovery for DR, eliminate downtime with a Hyper-V failover cluster… The list goes on.

As such, over the last decade, I’ve sold a lot of virtualization solutions–from both VMware & Microsoft. After 2008 R2 and then 2012, Hyper-V emerged as the clear leader in the SMB space. There is no reason to waste money on anything else. Hyper-V will do everything you need it to, and then some. The best part is: it’s already included in your Windows Server licensing. Who would refuse that deal?

How to make your virtual machines highly available

Do you want to know the secret sauce behind providing highly available IT systems? It goes something like this:

Why buy one when you can get two for twice the price?

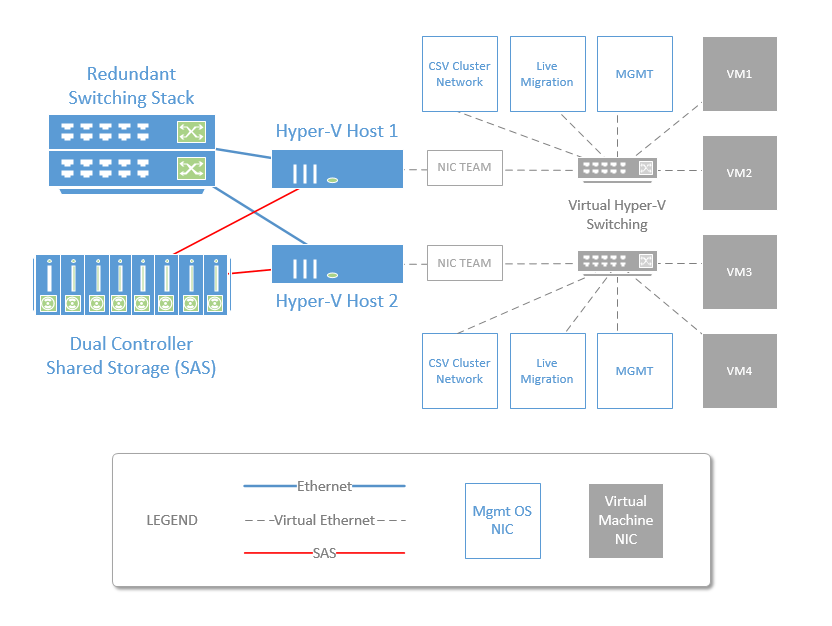

There really isn’t more to it than that. For the SMB, one of the most popular and affordable ways to accomplish this is to deploy two Hyper-V servers, let them share a common set of highly available disks, and cluster the virtualization platform. We call this a “2-2-1” for short (two servers, two physical switches, and one shared storage device).

I’ve been selling this solution (and VMware ones like it) for years now, and I still love its elegance when I share it with a new client. Since the release of Windows Server 2012, Hyper-V has really grown up to provide super cost-effective ways of enabling highly available workloads, and it solves a wide variety of different business problems for organizations of every size and type.

Image credit: itpromentor.com

Note: A 2-2-1 does not protect against failures internal to any given VM, but it does protect against any given hardware-based failure. When the solution is combined with a backup/DR strategy which natively supports virtualization, it is usually pretty easy to “roll back” your virtual machines in the event of a failed, infected or otherwise corrupt virtual partition, thus accomplishing some OS/application-level protection as well.

Let’s break down the various categories of fault tolerance in this diagram:

- I could lose an entire physical Hyper-V host server, and the virtual machines would simply restart on the other host: failover kicks in within seconds, and each VM is back as soon as its OS finishes booting.

- I could lose any disk, storage path or even an entire storage controller and not miss a single beat of service. Note: Shared storage is a requirement for configuring this kind of failover cluster, and there are many ways to do shared storage these days.* It can be both affordable and fully redundant with dual SAS storage controllers, redundant power connections, and redundant storage paths to each host server.

- I could lose multiple NICs or even a whole switch, as long as I have set up two managed switches with trunk ports into the host servers and configured Windows Server NIC Teaming–which provides both failover and load balancing of the virtual NIC traffic across all the physical NICs in the system.**

All of this can be done with pretty much any hardware vendor that supports virtualization–take your pick of Dell, HP, Lenovo, etc. There are even “Cluster-in-a-Box” (CiB) solutions produced by various vendors that wrap all these components into a single, affordable rack-mount appliance. These days, Microsoft makes it so damn easy and affordable to set these things up that it is hard for me to recommend anything else when high availability is part of the conversation (and when isn’t it anymore?).

What if I need more than two servers?

Clusters can support insane numbers of physical (64) and virtual (8,000) servers–but for the vast majority of SMB organizations that I work with, two physical servers are usually plenty. You could easily spec out a server to support several dozen virtual machines, and most SMBs won’t come close to consuming this.
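One sizing detail is worth checking before you commit to two hosts: if one host dies, the survivor has to carry every VM by itself. Here is a rough sketch of that check in Python. The host names, RAM figures, VM list and the 8 GB host reserve are all made-up assumptions for illustration, and the check only looks at statically assigned RAM (no Dynamic Memory, CPU or storage). For clusters larger than two nodes it compares totals only, so it is a necessary condition rather than a placement plan.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    ram_gb: int
    reserved_gb: int = 8  # assumed headroom for the host OS; adjust for your hardware

    @property
    def usable_gb(self) -> int:
        # RAM left over for virtual machines after the host reserve
        return self.ram_gb - self.reserved_gb


def survives_single_host_failure(hosts, vm_ram_gb):
    """Return True if the remaining hosts can hold all VM RAM after losing any one host."""
    total_vm_ram = sum(vm_ram_gb.values())
    for failed in hosts:
        survivors = [h for h in hosts if h is not failed]
        if sum(h.usable_gb for h in survivors) < total_vm_ram:
            return False
    return True


# Hypothetical 2-2-1 deployment: two identical hosts and a handful of VMs
hosts = [Host("HV1", 128), Host("HV2", 128)]
vms = {"DC1": 8, "FILE1": 16, "SQL1": 64, "APP1": 24}

print(survives_single_host_failure(hosts, vms))
```

With these sample numbers the VMs need 112 GB and a single surviving host offers 120 GB, so the check passes. Add one more 16 GB VM and it fails, which is the signal to buy more RAM or trim workloads before relying on failover.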

In our market segment, the bottleneck is not usually in CPU or RAM. Most often, storage is the biggest concern, and the biggest line item for the budget. Storage Spaces can help with this, and some hardware vendors have come down in their pricing of entry-level SAS-connected SAN offerings, as well.

Even with IaaS cloud options from Amazon and Azure, Hyper-V continues to be a very attractive and affordable solution for the SMB, and I do not think it is going away any time soon. In the upcoming posts, I will detail how to set up the networking & storage required for building this cluster, including how this process can be automated with PowerShell for deploying it quickly and easily.

Footnotes:

*You could do a “virtual” SAN to avoid a separate physical appliance, but that generally means you buy all of your disks at least twice (and disks are expensive). You can buy them just once by sticking with a physical shared storage solution, which could even be a JBOD, saving even more money against a SAN when combined with Windows Server Storage Spaces. SAS-connected shared storage in general is usually less expensive than buying a Fibre Channel or even iSCSI SAN these days.

**The NIC teaming can be configured entirely within Windows, meaning you do not need fancy networking equipment or specialized skills to accomplish this solution.

Comments (4)

Do you have any specific recommendations on a “Dual Controller Shared Storage (SAS)”? I currently have a Dell PowerEdge R630 as my primary Hyper-V host & an Asus RS500-E6/PS4 as my secondary. Both are using directly attached storage (mirrored 800GB NVMe SSDs on the former & mirrored 400GB SSDs on the latter) but I’m running out of space & would like better availability/redundancy in my setup. Thanks heaps!

You know, I don’t have a favorite here. Some of the more interesting solutions are the “Cluster in a box” designs–such as those by DataOn. Those include compute nodes as well as storage, all in one. But for storage enclosures without compute nodes, there are some good options out there, like the Lenovo/IBM Storwize V3700, for example, which is a lower-end, entry-level SAN, but perfectly adequate for most SMBs. EMC VNX/VNXe, NetApp FAS2500, and HP MSA are all in that category also. Many of these would have direct-attach SAS as a connectivity option.

Thanks, that gives me an idea of where to start my research.